In my electrical engineering seminar on Cyber-Physical Systems this semester, guest speaker Dr. Yanzhi Wang presented his research on stochastic-computing-based deep neural networks (DNNs). I found the talk fascinating and wanted to share what I learned.

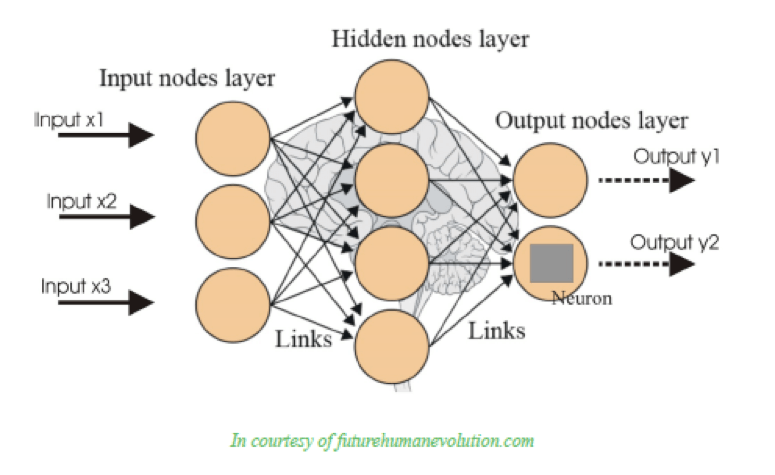

Deep neural networks (DNNs) have grown rapidly over the last decade, both as a research area and as a commercially applicable branch of machine learning. Using large networks trained on large data sets, a DNN automatically learns complex patterns and extracts representations or classifications of that information at multiple levels. DNNs are among the most commonly used types of artificial neural network (ANN). ANNs are loosely modeled on biological neural networks: they are MIMO (multi-input, multi-output) systems with layers of hidden nodes between the input and output layers (see the top image), and a "deep" network is one with several hidden layers.
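To make the layered structure concrete, here is a minimal Python sketch of a forward pass through a tiny fully connected network. The weights, layer sizes, and ReLU activation are hypothetical choices for illustration only; a real DNN stacks many more hidden layers and learns its weights from data.

```python
def relu(x):
    # Rectified linear unit, a common hidden-layer nonlinearity
    return max(0.0, x)


def identity(x):
    # Linear output node (no nonlinearity)
    return x


def dense(inputs, weights, biases, activation):
    # Each node takes every input (multi-input), computes a weighted sum plus a bias,
    # and applies the activation; the layer returns one value per node (multi-output)
    return [activation(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]


def forward(inputs, layers):
    # Pass the signal through each layer in order: input -> hidden -> output
    activations = inputs
    for weights, biases, activation in layers:
        activations = dense(activations, weights, biases, activation)
    return activations


# Hypothetical weights: 3 inputs -> 2 hidden nodes -> 2 outputs
layers = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1], relu),
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0], identity),
]
output = forward([1.0, 2.0, 3.0], layers)
print([round(v, 6) for v in output])  # [-0.1, 0.45]
```

Adding another `(weights, biases, activation)` entry to `layers` adds another hidden layer, which is all "deeper" means in this picture.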

There is a need for a design and optimization framework for low-power deep convolutional neural networks (DCNNs), a type of DNN, that can run on embedded and portable systems. "Smart" mobile devices and Internet of Things (IoT) devices continue to grow in popularity and usage. Software-based DCNNs have reached maturity and commercial viability in a range of applications such as image classification, text translation (e.g., Google Translate), and speech recognition. Their major disadvantage, however, is that they consume a lot of energy and require substantial computational power. Software DCNN implementations rely on large, high-performance server clusters on the back end, which is not feasible for ultra-low-power embedded or portable IoT systems.

As a biomedical engineer, I didn’t immediately see how DNNs, apart from their name, relate to my field. However, wearable health devices, remote patient monitoring, and implantable glucose sensors for continuous monitoring are all examples of biomedical devices with “smart” software that are part of the Internet of Things trend. It is therefore important that I understand how DNNs work, so that I can design better devices with the limitations and possibilities of the software in mind.

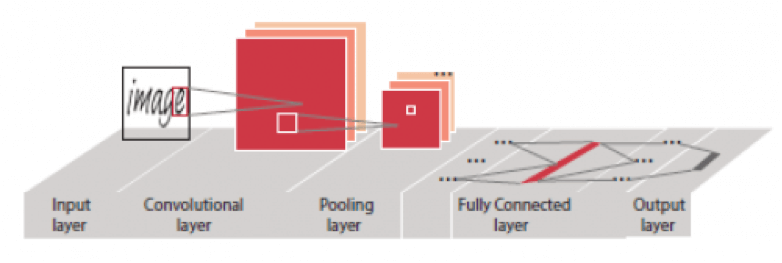

The innovative part of Dr. Wang’s research was using stochastic computing (SC) to build a hardware implementation of a DCNN with a bottom-up approach (SC-DCNN). In stochastic computing, a number is represented by a random bitstream whose fraction of 1s encodes the value, so many arithmetic operations reduce to simple logic: for example, multiplication can be done with a single AND gate and scaled addition with a multiplexer. This reduces hardware complexity, improves scalability, and significantly increases energy efficiency. SC is also error resilient, because every bit in a stream carries the same weight, and its simple circuits can run at a high clock frequency.

SC-DCNN optimizes the three main operations (function blocks) of a DCNN: convolution, pooling, and the fully connected layer (as seen in the second image). A typical DCNN architecture starts at the input layer, extracts features through convolution, downsamples them through pooling, forms high-level ("cognitive") representations in the fully connected layer, and passes the result to the output layer.
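The two basic stochastic computing operations can be simulated in software. This sketch assumes the unipolar SC format (values in [0, 1], each bit independently 1 with probability equal to the value); SC hardware also commonly uses a bipolar format with XNOR-based multiplication for signed values, so this illustrates the general idea rather than Dr. Wang’s actual circuits. The helper names (`to_stream`, `sc_multiply`, `sc_scaled_add`) are hypothetical.

```python
import random


def to_stream(p, length, rng):
    # Unipolar SC encoding (assumed): each bit is 1 with probability p, 0 <= p <= 1
    return [1 if rng.random() < p else 0 for _ in range(length)]


def from_stream(bits):
    # Decode: the represented value is the fraction of 1s in the stream
    return sum(bits) / len(bits)


def sc_multiply(a_bits, b_bits):
    # Bitwise AND of two independent streams: P(a AND b) = P(a) * P(b)
    return [a & b for a, b in zip(a_bits, b_bits)]


def sc_scaled_add(a_bits, b_bits, sel_bits):
    # 2-to-1 multiplexer: output the a bit when sel = 1, else the b bit.
    # With P(sel) = 0.5 the output stream represents (a + b) / 2
    return [a if s else b for a, b, s in zip(a_bits, b_bits, sel_bits)]


rng = random.Random(42)  # fixed seed so the run is repeatable
N = 100_000              # stream length: longer streams give more accurate results
a = to_stream(0.8, N, rng)
b = to_stream(0.5, N, rng)
sel = to_stream(0.5, N, rng)

product = from_stream(sc_multiply(a, b))
scaled_sum = from_stream(sc_scaled_add(a, b, sel))
print(f"0.8 * 0.5 ~= {product:.3f}")            # close to 0.400
print(f"(0.8 + 0.5) / 2 ~= {scaled_sum:.3f}")  # close to 0.650
```

Stream length is the main design trade-off here: longer streams give more accurate results at the cost of latency, while flipping any single bit changes the decoded value by only 1/N, which is where the error resilience comes from.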

One application I would like to see is SC-DCNN in the healthcare industry, for example to improve how data is entered into electronic health records through voice recognition, or to translate discharge reports for patients whose primary language is not English.

Published on December 14th, 2016

Last updated on August 29th, 2017